Connect Your App to Agent Flows

The Prismatic MCP flow server allows you to connect your app's AI agent or chatbot to your agent flows.

Authentication for embedded customer users

If your AI client is embedded in your application (for example, you've built a chatbot with the AI SDK), you need to create an embedded JWT for your customer user and send that JWT as a bearer token to Prismatic's MCP flow server.
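
The signing step might look like the following minimal sketch, which builds an RS256-signed JWT using only Node's built-in crypto module. The algorithm, the claim names (sub, organization, customer), the 10-minute lifetime, and the helper name signEmbeddedJwt are assumptions for illustration, and the key pair is a throwaway generated only so the example runs on its own. Use the signing key and claims that Prismatic's embedded authentication documentation specifies.

```typescript
import { createSign, createVerify, generateKeyPairSync } from "node:crypto";

// Assumed claim set for illustration; confirm the required claims against
// Prismatic's embedded authentication documentation.
interface EmbeddedClaims {
  sub: string; // unique ID of the user in your system
  organization: string; // your organization ID
  customer: string; // ID of the customer the user belongs to
  iat: number; // issued-at time, in seconds since the epoch
  exp: number; // expiry time, in seconds since the epoch
}

// Base64url-encode a JSON-serializable value, as the JWT format requires.
const encodeSegment = (value: object): string =>
  Buffer.from(JSON.stringify(value)).toString("base64url");

// Hypothetical helper: sign the claims with an RSA private key (RS256).
function signEmbeddedJwt(
  claims: Omit<EmbeddedClaims, "iat" | "exp">,
  privateKeyPem: string,
  ttlSeconds = 600,
): string {
  const now = Math.floor(Date.now() / 1000);
  const payload: EmbeddedClaims = { ...claims, iat: now, exp: now + ttlSeconds };
  const signingInput = `${encodeSegment({ alg: "RS256", typ: "JWT" })}.${encodeSegment(payload)}`;
  const signature = createSign("RSA-SHA256")
    .update(signingInput)
    .sign(privateKeyPem, "base64url");
  return `${signingInput}.${signature}`;
}

// Usage with a throwaway key pair; in a real app, load your signing key instead.
const { privateKey, publicKey } = generateKeyPairSync("rsa", {
  modulusLength: 2048,
  publicKeyEncoding: { type: "spki", format: "pem" },
  privateKeyEncoding: { type: "pkcs8", format: "pem" },
});

const token = signEmbeddedJwt(
  { sub: "user-123", organization: "example-org-id", customer: "example-customer-id" },
  privateKey,
);

// Round-trip check: the signature verifies against the matching public key.
const lastDot = token.lastIndexOf(".");
const verified = createVerify("RSA-SHA256")
  .update(token.slice(0, lastDot))
  .verify(publicKey, token.slice(lastDot + 1), "base64url");
console.log(verified);
```

Sign the token on your server so the private key never reaches the browser, and keep the lifetime short, since anyone holding the token can act as that customer user until it expires.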

Node AI SDK

If you're building your own AI client using the AI SDK, you can use the createMCPClient function (exported from the ai package as experimental_createMCPClient) to register Prismatic's MCP flow server with the SDK.

You'll need to provide a StreamableHTTPClientTransport that points to Prismatic's MCP flow server and sends the customer user's embedded JWT in its Authorization header (this can be the same JWT you use for embedding Prismatic).

When invoking your LLM with streamText, provide the tools fetched from Prismatic's MCP flow server to the tools option.
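
Because the fetched tools are a plain object keyed by tool name, you can narrow the set before handing it to streamText. The sketch below shows one way to do that. The helper name omitTools and the tool name create-ticket are hypothetical, and the stand-in object only mimics the shape of what the MCP client returns.

```typescript
// Hypothetical helper: return a copy of a tool map without the named tools.
function omitTools<T>(
  tools: Record<string, T>,
  excluded: readonly string[],
): Record<string, T> {
  const blocked = new Set(excluded);
  const kept: Record<string, T> = {};
  for (const name of Object.keys(tools)) {
    const tool = tools[name];
    if (tool !== undefined && !blocked.has(name)) {
      kept[name] = tool;
    }
  }
  return kept;
}

// Stand-in tool map; in practice this would be the result of `await mcpClient.tools()`.
const fetchedTools = {
  "get-me": { description: "Return the current user" },
  "create-ticket": { description: "Open a support ticket" },
};

// Drop tools the model should not call, then pass the rest to `streamText`.
const allowedTools = omitTools(fetchedTools, ["get-me"]);
console.log(Object.keys(allowedTools));
```

A list of excluded names is easier to extend than destructuring each tool out by hand, and it leaves the original tool map untouched.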

    import { openai } from "@ai-sdk/openai";
    import { StreamableHTTPClientTransport } from "@modelcontextprotocol/sdk/client/streamableHttp.js";
    import {
      streamText,
      experimental_createMCPClient as createMCPClient,
    } from "ai";

    // Allow streaming responses up to 30 seconds
    export const maxDuration = 30;

    // Fetch all tools available from Prismatic's MCP server given an embedded customer user's access token
    const getTools = async (prismaticAccessToken: string) => {
      const transport = new StreamableHTTPClientTransport(
        new URL("https://mcp.prismatic.io/mcp"),
        {
          requestInit: {
            headers: {
              Authorization: `Bearer ${prismaticAccessToken}`,
            },
          },
        },
      );

      const mcpClient = await createMCPClient({
        transport: transport,
        onUncaughtError(error) {
          console.error("Error in MCP client:", error);
          throw error;
        },
      });

      // Remove the "get-me" tool if it exists
      const { "get-me": getMe, ...tools } = await mcpClient.tools();

      return tools;
    };

    // Handle chat completion requests from a chat bot
    export async function POST(req: Request) {
      const { messages } = await req.json();

      const mcpTools = await getTools(
        (req.headers.get("Authorization") ?? "").replace("Bearer ", ""),
      );

      const result = streamText({
        model: openai("gpt-4o"),
        messages,
        tools: { ...mcpTools },
        maxSteps: 20,
        onError: (error) => {
          console.error("Error in AI response:", error);
          throw error;
        },
      });

      return result.toDataStreamResponse();
    }
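
The route handler strips the Bearer prefix with a plain string replace, so a missing or malformed Authorization header results in an empty token being forwarded to the MCP flow server. A stricter parse could look like the sketch below. The helper name extractBearerToken is hypothetical and not part of the example app.

```typescript
// Hypothetical helper: pull the token out of an Authorization header value.
// Returns null when the header is missing, uses another scheme, or carries no token.
function extractBearerToken(header: string | null): string | null {
  if (!header) {
    return null;
  }
  // The scheme name is matched case-insensitively; the token is a single
  // run of non-whitespace characters.
  const match = /^Bearer\s+(\S+)$/i.exec(header.trim());
  return match?.[1] ?? null;
}

console.log(extractBearerToken("Bearer abc.def.ghi"));
console.log(extractBearerToken("Basic dXNlcjpwYXNz"));
console.log(extractBearerToken(null));
```

In the POST handler, you could call this on req.headers.get("Authorization") and respond with a 401 status when it returns null, rather than calling getTools with an empty string.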

A full example Next.js app is available on GitHub.

- util/tools.ts is a helper to fetch tools from Prismatic's MCP flow server.

- app/api/chat/route.ts is the API route that handles chat completions.

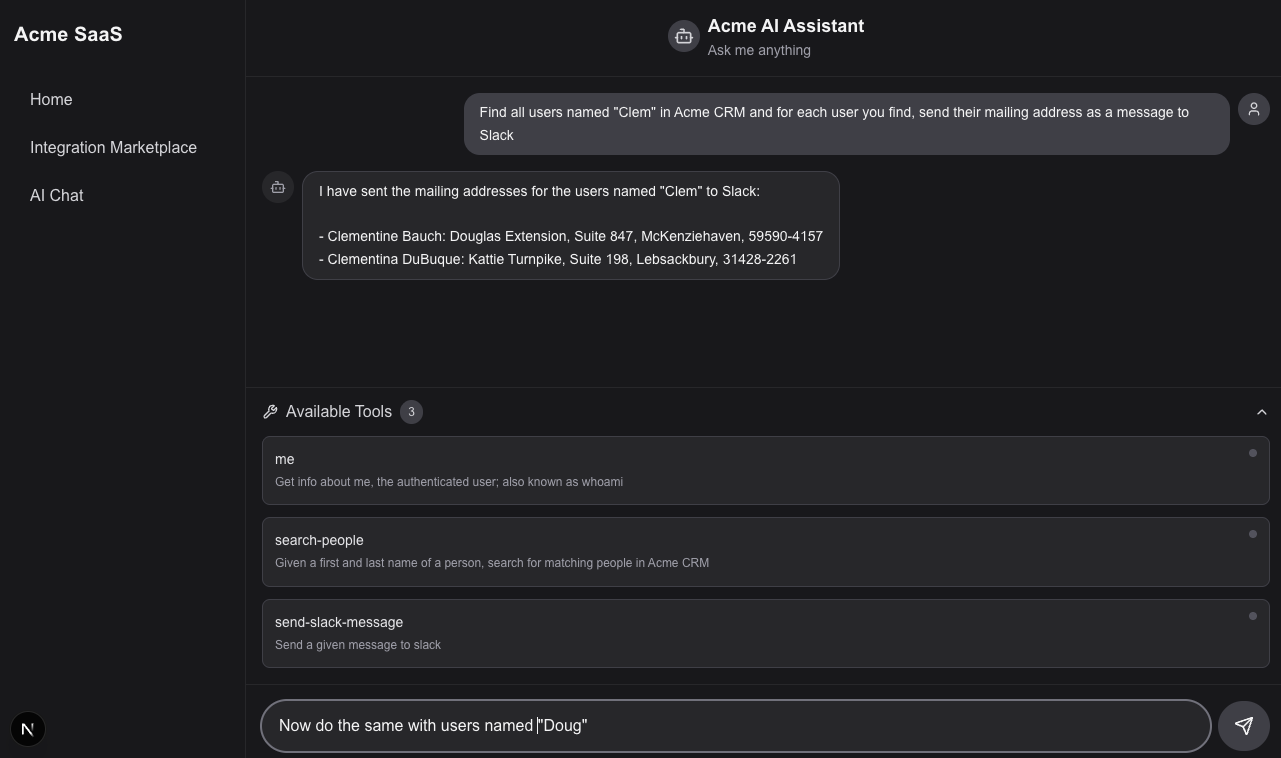

- app/ai-chat/page.tsx is the front-end chat UI.